The Cautionary

Learning AI #8

Should AI decide who lives and who dies? Plus an update on AI stock picks.

Learning AI

Each week, we will select two of the AI engines and pose a question or a problem, summarize the findings in our own words, and include the interactions with the AI for your review.

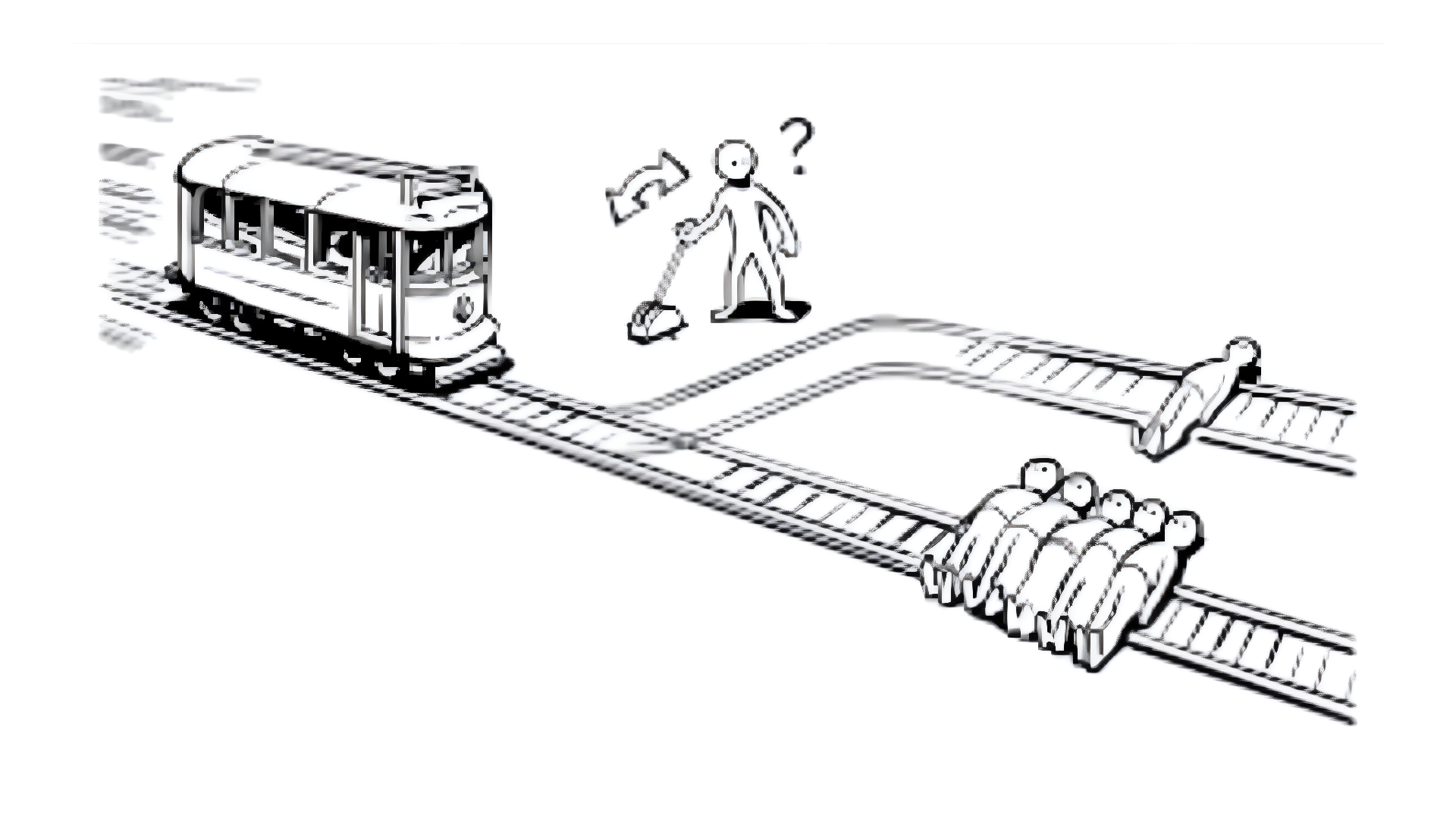

There is a classic ethical dilemma known as the Trolley Problem, and it goes something like this:

A runaway trolley is racing toward a junction, and five people on the track ahead will be killed if nothing changes. Should you pull the switching lever, divert the trolley, and kill only the single person on the adjacent track?

Whew, this is not an easy one, especially when the problem is spiced up by adding, “and the single person is your child.”

The Trolley Problem is an ethical dilemma. Do you save five people or one? What if the one was your child?

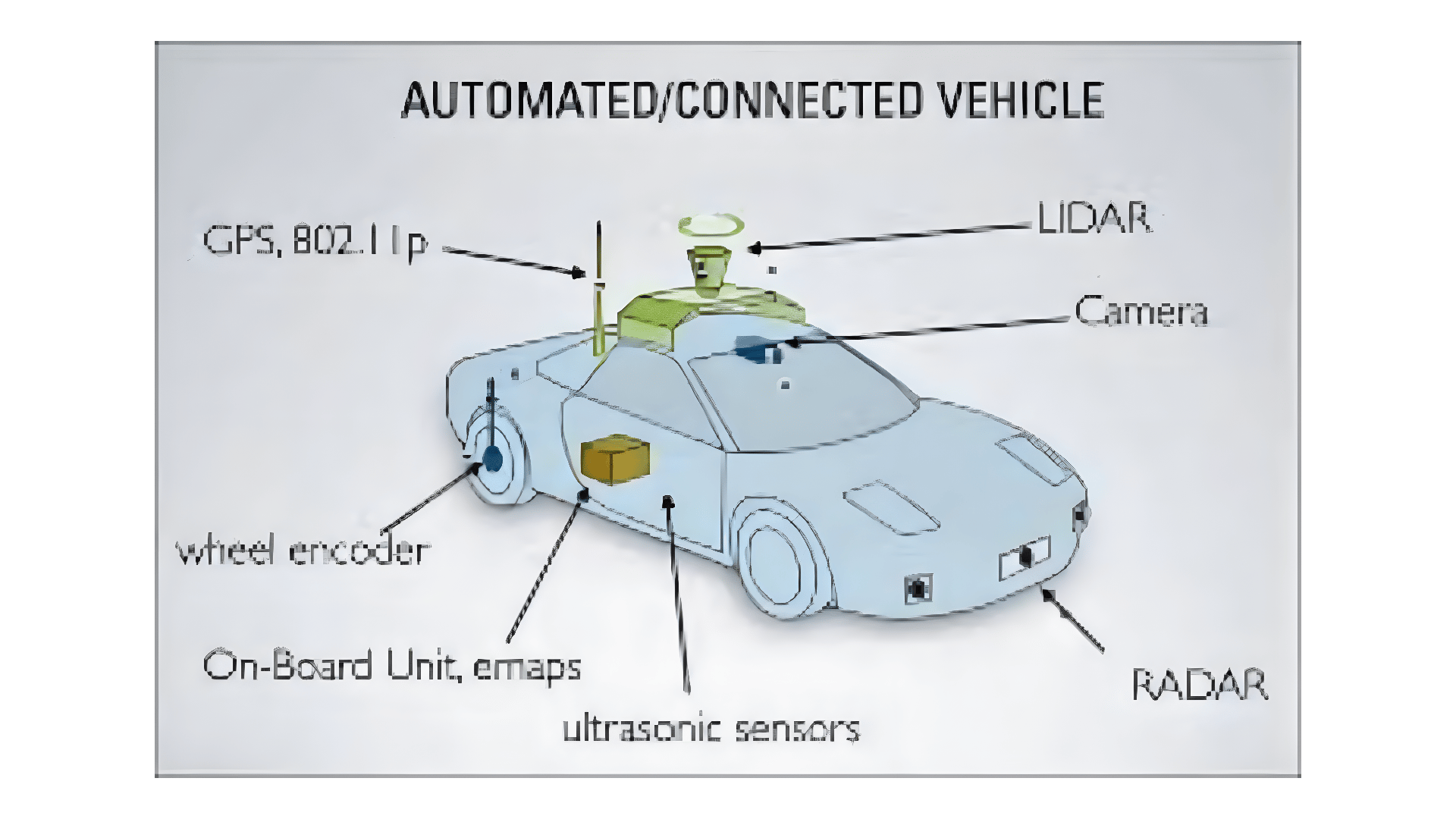

You may say, “Alright George, you shocked me with your opening, but where is this going?” Think about autonomous vehicles for a minute. How is the AI inside these vehicles programmed to deal with the Trolley Problem?

For autonomous vehicles (AVs), the situation might look more like this: “What does the car do if a person on a bicycle suddenly rides directly in front of the AV?” A sharp turn to the right means a collision with pedestrians on the sidewalk, and a sharp turn to the left means a head-on collision with other vehicles.

We put the following variation of the Trolley Problem to Claude and Gemini:

What should an autonomous vehicle do if a bicycle rider suddenly jumps in front of the AV? Turning right would run over three or more pedestrians, turning left would mean a head-on collision with another vehicle, and going straight would run over the bicycle rider.

Both AI engines gave detailed and well-reasoned responses to this no-win question.

The programming built into an AV tells it to “minimize overall harm with a bias toward maintaining trajectory.”

The first thing that jumped out was that a decision like this is made years in advance, in a conference room where engineers and businesspeople debate the issue. The AV does not work through the dilemma in real time. Instead, it selects a response from a pre-programmed menu based on the principle:

Minimize overall harm with a bias toward maintaining trajectory
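To make the principle concrete, here is a minimal sketch of how a rule like “minimize overall harm with a bias toward maintaining trajectory” could be expressed as a simple cost function. It is not any manufacturer’s actual code: the `Maneuver` class, the harm scores, and the `TRAJECTORY_BIAS` penalty are all hypothetical, chosen only to illustrate the idea.

```python
from dataclasses import dataclass


@dataclass
class Maneuver:
    name: str
    expected_harm: float      # hypothetical harm score: higher is worse
    changes_trajectory: bool  # True if the maneuver leaves the current lane


# Hypothetical penalty added to any maneuver that leaves the current path.
# This stands in for the "bias toward maintaining trajectory."
TRAJECTORY_BIAS = 0.5


def cost(m: Maneuver) -> float:
    """Expected harm, plus a penalty if the car would swerve."""
    return m.expected_harm + (TRAJECTORY_BIAS if m.changes_trajectory else 0.0)


def choose(options: list[Maneuver]) -> Maneuver:
    """Pick the option with the lowest total cost."""
    return min(options, key=cost)


# Made-up scores for the bicycle scenario, for illustration only.
options = [
    Maneuver("brake and hold course (cyclist)", expected_harm=1.0, changes_trajectory=False),
    Maneuver("swerve right (pedestrians)", expected_harm=3.0, changes_trajectory=True),
    Maneuver("swerve left (head-on)", expected_harm=2.0, changes_trajectory=True),
]
print(choose(options).name)
```

With these made-up numbers, holding course wins outright. The trajectory bias mostly acts as a tie-breaker: even a swerve scored at 0.8, slightly better than holding course at 1.0, would cost 1.3 after the penalty and still lose.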

The recommendation from both AIs was to apply maximum braking, hold course, and reduce the severity of the collision with the cyclist.

Swerving left or right introduces a series of new and unpredictable variables.

A head-on collision or running over pedestrians creates near-certain disaster, while the solo biker may survive a reduced-speed collision.
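Why does braking in a straight line help the cyclist? A short worked example, using the standard physics formulas for constant deceleration (v² = v₀² − 2ad) and kinetic energy (KE = ½mv²), shows how much energy braking can remove before impact. The speed, braking distance, deceleration, and vehicle mass below are hypothetical values, not figures from either AI.

```python
import math


def impact_speed(v0: float, decel: float, distance: float) -> float:
    """Speed (m/s) left on reaching the obstacle after braking from v0
    at a constant deceleration over `distance` metres: v^2 = v0^2 - 2*a*d."""
    v_sq = v0**2 - 2 * decel * distance
    return math.sqrt(v_sq) if v_sq > 0 else 0.0  # 0.0 means the car stops in time


def kinetic_energy(mass: float, v: float) -> float:
    """KE = 1/2 * m * v^2, in joules."""
    return 0.5 * mass * v**2


# Hypothetical inputs: a 2,000 kg vehicle at 50 km/h, braking at 7 m/s^2
# over the last 10 m before reaching the cyclist.
mass = 2000.0
v0 = 50 / 3.6  # convert km/h to m/s
v_hit = impact_speed(v0, decel=7.0, distance=10.0)
ratio = kinetic_energy(mass, v_hit) / kinetic_energy(mass, v0)
print(f"impact speed: {v_hit * 3.6:.0f} km/h, energy remaining: {ratio:.0%}")
```

Under these assumptions, braking roughly halves the impact speed and removes nearly three-quarters of the energy, because energy grows with the square of speed. The mass cancels out of the ratio, so the result does not depend on how heavy the car is.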

They also said that, hey, the bicycle rider bears some responsibility for his erratic behavior, and he put himself in harm’s way.

Further embedded in the AV’s electronic brain is the concept of legal liability. If the rogue cyclist is hit by the AV, some of the blame falls on the cyclist.

But if the AV decided to avoid the cyclist by steering onto the sidewalk, and pedestrians were killed, we would have a different story. The AV made a deliberate (conscious?) decision to steer into the pedestrians, which could even result in criminal charges against the AV manufacturer.

In many ways the pre-programmed nature of the AV’s decision making is far superior to that of a human when faced with a split-second life-or-death decision.

The AI inside the vehicle does not have the emotions that burden much of human decision-making, and the human brain simply cannot process what is going on nearly as fast as the AI can.

You may say, “What’s the big deal? I would choose the path of least harm, which is possibly killing one person on a bicycle rather than several pedestrians on the sidewalk or causing a head-on collision.”

But it’s not that simple. You would not have time to run the scenarios through your mind in the fraction of a second you have to react.

In the same situation, a human would act reflexively and probably swerve left or right to avoid what is perceived as the clear and imminent danger of the cyclist. It would be the wrong decision.
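The reaction-time gap can also be put in numbers. The sketch below assumes the car travels at full speed until the driver (or the AV) reacts, then brakes at a constant rate. The 1.0-second human reaction time, the 0.2-second AV reaction time, the 15-metre gap, and the braking rate are all illustrative assumptions, and the model ignores brake-system lag and tire grip.

```python
import math


def speed_at_obstacle(v0: float, gap: float, reaction_time: float, decel: float) -> float:
    """Speed (m/s) when the car reaches an obstacle `gap` metres ahead.

    The car covers v0 * reaction_time metres before braking starts,
    then decelerates at a constant `decel` m/s^2 over whatever distance is left.
    """
    braking_distance = gap - v0 * reaction_time
    if braking_distance <= 0:
        return v0  # the obstacle is reached before the brakes are even applied
    v_sq = v0**2 - 2 * decel * braking_distance
    return math.sqrt(v_sq) if v_sq > 0 else 0.0  # 0.0 means the car stops in time


# Hypothetical scenario: 50 km/h, cyclist appears 15 m ahead, braking at 7 m/s^2.
v0 = 50 / 3.6  # convert km/h to m/s
for driver, reaction in [("human (assumed 1.0 s)", 1.0), ("AV (assumed 0.2 s)", 0.2)]:
    v_hit = speed_at_obstacle(v0, gap=15.0, reaction_time=reaction, decel=7.0)
    print(f"{driver}: reaches the cyclist at {v_hit * 3.6:.0f} km/h")
```

With these made-up numbers, the human driver reaches the cyclist at close to full speed, while the AV has already shed most of its speed.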

When we can feed AI hard data, like the choices involved in the AV Trolley Problem, it is a great problem solver, even when deciding who should die.

A final interesting finding was that AV makers do not offer a preferences menu that lets drivers customize settings for such scenarios. At least we don’t have to worry about all those lousy drivers adjusting their preferences to decide who lives and who dies.

Now we know why autonomous vehicles have taken so long to come to market and why they are being rolled out in a cautious city-by-city approach. AI can crunch numbers and compile historical research better than any of us, but when it comes to the cloudy framework of ethical decision-making, both humans and AI have a long way to go.

I encourage you to scroll down and read the AI dialogues on this fascinating topic.

To see one of my favorite no-win scenarios that relies on a human, click here.

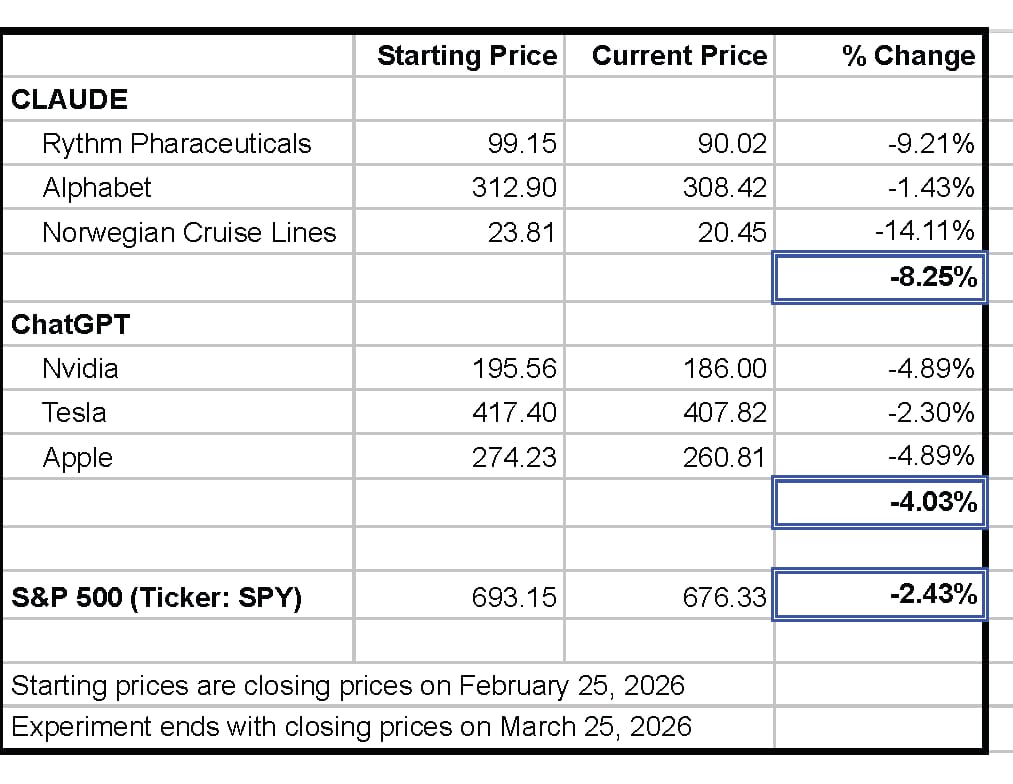

Update on AI stock picking

Here is where things stand two weeks into the four-week test. Current prices are closing prices on 11-March-2026.

Things I think about

YouTube was originally created to be a video dating site.

Response from Claude

What should an autonomous vehicle do if a bicycle rider suddenly jumps in front of the AV? Turning right would run over three or more pedestrians, turning left would mean a head-on collision with another vehicle, and going straight would run over the bicycle rider.

This is one of the most actively debated problems in AI ethics and autonomous vehicle design, and it's not hypothetical — engineers actually have to program answers to scenarios like this. Here's how the thinking breaks down:

The immediate engineering reality

Most AV systems are not designed to make trolley-problem calculations in real time. At highway speeds, the entire scenario unfolds in under a second — far too fast for utilitarian computation. What AVs actually do is operate on pre-programmed hierarchical priorities established long before the moment of crisis.

The dominant design philosophy today

Most manufacturers, including Waymo and Tesla, have settled on a principle sometimes called "minimize overall harm with a bias toward maintaining trajectory." The logic:

Swerving introduces unpredictability and new variables

The original threat (the bicycle rider) may be partially survivable depending on speed

A head-on collision or running over multiple pedestrians introduces near-certain serious harm

Erratic behavior by AVs destroys public trust and creates downstream safety problems

In your specific scenario, most current AV architectures would apply maximum braking and hold course — hitting the bicycle rider at reduced speed — rather than executing an evasive maneuver that guarantees worse outcomes.

The deeper ethical problem

This scenario exposes three genuinely unresolved tensions in AV ethics:

First, the pre-commitment problem. The AV's decision is really made by engineers in a conference room years earlier. That means society is deciding in advance who dies in edge cases without the people affected having any say.

Second, the numbers versus relationships problem. Should an AV count lives mathematically — always minimizing casualties — or should it have inviolable rules like "never actively steer toward pedestrians regardless of the math"? Pure utilitarianism says hit the three pedestrians if it saves five elsewhere. Most people find that repugnant as a design principle.

Third, the liability and incentive problem. If a manufacturer programs an AV to sacrifice its own passenger to save pedestrians, will anyone buy the car? This creates a perverse market incentive toward passenger-protective algorithms that may not be socially optimal.

What the MIT Moral Machine study found

MIT surveyed millions of people across 233 countries on exactly these scenarios. The results were uncomfortable — people's preferences varied dramatically by culture, and nearly everyone showed biases favoring younger lives, higher-status individuals, and people who followed traffic laws. Nobody actually wants those biases baked into algorithms, but the alternative is imposing a single culture's ethical framework globally.

The honest answer

There is no ethically clean solution. The bicycle rider in your scenario bears some responsibility for jumping into traffic, which influences moral intuitions even if it doesn't change the math. The three pedestrians did nothing wrong. The oncoming driver did nothing wrong.

What AV ethics ultimately forces us to confront is that we already make these decisions every time we set speed limits, design roads, and regulate vehicles — we just do it statistically and anonymously rather than individually and visibly. The AV just makes the hidden decision-making uncomfortably explicit.

The most defensible current answer is probably: maximum braking, maintain course, minimize speed at impact — not because it's perfect, but because it's the most predictable, legally defensible, and least likely to introduce catastrophic new variables in the fraction of a second available.

Response from Gemini

What should an autonomous vehicle do if a bicycle rider suddenly jumps in front of the AV? Turning right would run over three or more pedestrians, turning left would mean a head-on collision with another vehicle, and going straight would run over the bicycle rider.

This scenario is a real-world application of the "Trolley Problem" known in the industry as Machine Ethics or Collision Optimization. For an autonomous vehicle (AV), this isn't a philosophical debate; it is a series of millisecond calculations based on pre-programmed priority logic.

While there is no global "standard" yet, the industry generally follows a hierarchy of safety known as Minimum Risk Manoeuvres.

1. The Priority Hierarchy

Most AV developers (like Waymo, Tesla, or Zoox) program their systems based on a "Vulnerable Road User" (VRU) hierarchy. The goal is to minimize the "Total Expected Harm."

| Priority | Target | Logic |
|---|---|---|
| Highest Protection | Pedestrians | They have zero protection (no steel cage, no helmet) and are the most unpredictable. |
| High Protection | Cyclists | They have minimal protection (helmets) but are considered high-risk VRUs. |
| Medium Protection | Occupants of other vehicles | They are protected by crumple zones, airbags, and seatbelts. |
| Baseline Protection | AV Occupants | The vehicle's own safety systems are designed to protect the "cargo." |

2. Analyzing the Specific Dilemma

In your specific scenario, the AI must choose between three "bad" outcomes. Here is how the logic usually processes that data:

Option A: Turning Right (Pedestrians)

Verdict: Strict Avoidance.

Reasoning: Hitting three or more pedestrians is considered the "Maximum Harm" event. In almost every ethical framework (Utilitarian or Deontological), steering into a crowd of bystanders to save a single person (the cyclist) is forbidden.

Option B: Turning Left (Head-on Collision)

Verdict: High Risk / Last Resort.

Reasoning: A head-on collision involves the combined velocity of two moving objects ($V_1 + V_2$), making it extremely lethal. However, vehicles have safety ratings and airbags. If the AI determines that a "glancing blow" or a low-speed impact is possible, it might consider this over hitting a pedestrian.

Option C: Going Straight (The Cyclist)

Verdict: The Likely Outcome.

Reasoning: While tragic, the cyclist is the "Initial Hazard." AV safety logic generally dictates that the vehicle should stay in its lane and apply Maximum Braking. Steering into "uninvolved" parties (the pedestrians or the oncoming car) to save the person who created the hazard is technically and legally complex.

3. The "No-Choice" Protocol (Emergency Braking)

In reality, most AVs are programmed with a "Stay in Lane and Brake" default. This is because:

Sensor Blind Spots: Swerving into another lane or onto a sidewalk risks hitting objects the sensors haven't fully classified yet.

Predictability: If an AV swerves, it becomes an unpredictable hazard to everyone else on the road.

The Physics of Braking: Modern AVs can initiate full braking pressure faster than a human. By staying straight and braking, the vehicle sheds as much kinetic energy ($KE = \frac{1}{2}mv^2$) as possible before impact, which is the most effective way to save lives.

The Legal Reality

Manufacturers generally avoid "Ethics Settings" where a user can choose who dies. Instead, they focus on Proximate Cause. If the cyclist jumped out suddenly, the legal liability lies with the cyclist. If the car swerves and hits a pedestrian on the sidewalk, the liability shifts to the car manufacturer for "choosing" to hit a bystander.

Would you like to see how different countries (like Germany vs. the US) are legislating these specific ethical "black box" decisions?

**********