Learning AI #16

AI, Iron Dome, and lethal force decisions

If a rocket is launched from Gaza toward an apartment building in Tel Aviv, you have between 15 and 90 seconds to decide whether to shoot it down. AI makes that call.

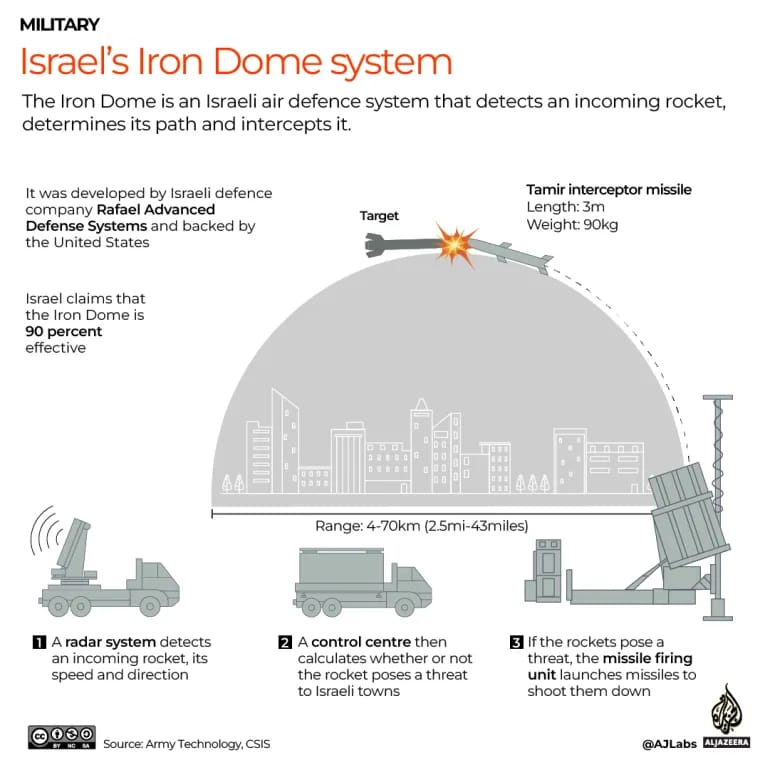

The Iron Dome is Israel's short-range air defense system. It went live in 2011. Since then it has fired tens of thousands of interceptor missiles at incoming rockets, mortars, and artillery shells. Some sources put its success rate above 90% for threats it engages.

The Iron Dome does not try to stop every rocket. Each interceptor missile costs somewhere between $50,000 and $100,000. The rockets it shoots down often cost a few hundred dollars to build. If the system tried to engage every threat, the math would bankrupt the country in a week.

The Iron Dome is a defensive system and is less controversial than an AI-driven offensive attack system.

So the system has to choose. Each incoming rocket gets tracked the moment its trajectory clears its launcher. The Iron Dome’s radar computes where it's going to land. If the landing zone is a parking lot or an empty field, the Iron Dome lets it fall. If the landing zone is a hospital or an apartment building, the system commits an interceptor.
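To make that concrete, here is a toy sketch of what such a rule could look like in code. It is not the Iron Dome's actual software, which is not public. The zone names, coordinates, and the `should_engage` function are all made up for illustration, and the choice to ignore anything outside the mapped zones is my assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Zone:
    """A rectangle on a hypothetical map, with a human-assigned policy flag."""
    name: str
    x_min: float
    x_max: float
    y_min: float
    y_max: float
    protected: bool  # decided by people, long before any rocket is fired

    def contains(self, x: float, y: float) -> bool:
        return self.x_min <= x <= self.x_max and self.y_min <= y <= self.y_max


# Hypothetical map: every entry here is a policy decision, not a calculation.
ZONES = [
    Zone("apartment block", 0, 100, 0, 100, protected=True),
    Zone("parking lot", 100, 200, 0, 100, protected=False),
]


def should_engage(impact_x: float, impact_y: float, zones=ZONES) -> bool:
    """Return True if the predicted impact point falls in a protected zone."""
    for zone in zones:
        if zone.contains(impact_x, impact_y):
            return zone.protected  # first matching zone wins
    # Assumption for this toy: unmapped ground is treated as open and ignored.
    return False
```

Notice how little of this is "intelligence." The function runs fast, but everything that matters lives in the `protected` flags, and a person typed those in.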

This decision happens in milliseconds. There is a human in the loop, technically. But the human sees a screen that says "engage" and presses a button, or the system engages on its own under pre-authorized rules of engagement. The trajectory math, the threat classification, the cost-benefit, all of it is software doing what no human could do at that speed.

Now ask the harder question. Who decided which neighborhoods get defended and which do not?

Who wrote the rule that says a strike on a school triggers an interceptor but a strike on an empty parking lot does not?

That decision was made in a conference room, years ago, by engineers, military officers, and lawyers. They wrote it into the software. The AI runs that rule a thousand times a day.

When people say "an AI made the decision," what they usually mean is that an AI ran a rule somebody else wrote. The rule was written by humans. The AI provides the speed. The interesting question, every time, is who wrote the rule.

That sounds obvious until you try to apply it. Most rules built into AI systems are not written down anywhere a normal person can read them. They are buried in computer code and training data. You see the result, but do not see the rule.

During the Gaza war in 2014, the Iron Dome intercepted 100+ projectiles per week.

For the Iron Dome, the rule is: protect civilian lives, accept that interceptors are expensive, and accept that some rockets will land where we did not defend. Most people, looking at that trade-off, would write the same rule.

The Iron Dome is the easy case. The threat is rockets fired at civilian areas. The calculations are straightforward and the cost-benefit is clear. A good situation for AI to drive decisions.

Other AI systems are running rules in situations that are not as clear as the “see the rocket, fire the interceptor” logic of the Iron Dome.

What about the AI-driven loan denial because you were late on your car payment last month? The AI runs the rules and does not care that you were hospitalized for two weeks and did not catch up on your bills until you were home.

In the loan-approval example, the AI makes the process more efficient, but it does not make it better, because the underlying data are not as clear-cut as the data behind the Iron Dome's launch decision.
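A toy version of that loan rule might look like the sketch below. No real lender's system is being quoted here. The `Applicant` fields, the `approve_loan` function, and the zero-late-payments threshold are all hypothetical, chosen only to show how a rule can ignore context it was never told to read.

```python
from dataclasses import dataclass


@dataclass
class Applicant:
    late_payments_last_90_days: int
    was_hospitalized: bool  # context that exists, but the rule never looks at it


def approve_loan(applicant: Applicant, max_late_payments: int = 0) -> bool:
    """Approve only if late payments are at or below a human-chosen threshold."""
    return applicant.late_payments_last_90_days <= max_late_payments


# One late payment gives the same answer, with or without the hospital stay.
print(approve_loan(Applicant(late_payments_last_90_days=1, was_hospitalized=True)))
print(approve_loan(Applicant(late_payments_last_90_days=1, was_hospitalized=False)))
```

The hospital stay is right there in the data, and the rule never touches it. Somebody chose the threshold, and somebody chose what the rule gets to ignore.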

Don’t get mad at the Iron Dome if your house gets hit with an interceptor, or at the AI loan-approval process if it bounces your application. Get mad at the people who set the rules.

Things I think about

The Amazon River discharges so much freshwater that you can drink from the ocean 100 miles offshore.

**********